Human-Made Content Needs a Label: The AI Dilemma

4 Apr

Summary

- Generative AI's rise prompts calls for labeling human-created content.

- Existing standards like C2PA have proven ineffective for AI content.

- Blockchain and manual verification offer potential solutions for authenticity.

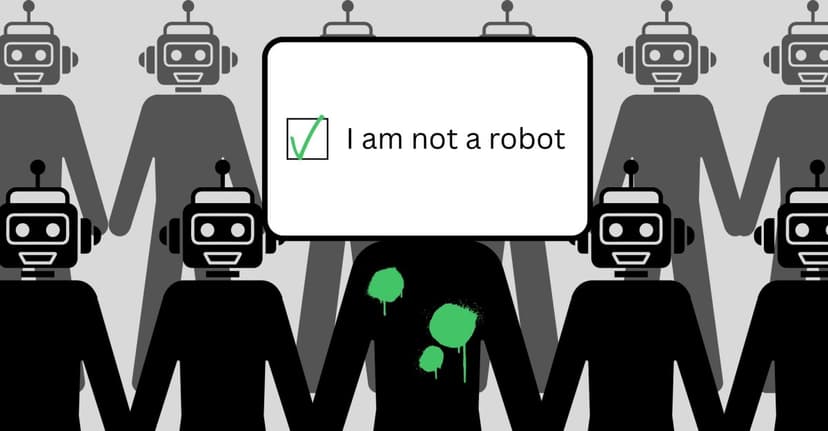

The increasing sophistication of generative AI has created a significant challenge: distinguishing human-made content from AI-generated material online. Platforms often fail to label AI content, and creators and consumers alike are losing trust as a result. That has led to calls for a universally recognized label for human-created works, akin to Fair Trade certification.

Existing standards, such as C2PA Content Credentials, have so far proven ineffective. Many individuals and entities that profit from AI-generated content have an incentive to conceal its origins. This has spurred the development of numerous independent labeling solutions, each with its own approach to verification, ranging from industry-specific certifications to broader platforms.

However, these solutions face hurdles. Some rely solely on trust or on unreliable AI detection services, while others require labor-intensive manual verification. Even defining 'human-made' becomes complex as AI is integrated into many creative tools. Approaches such as blockchain technology offer a more robust path by providing immutable records of human authorship, shifting the focus from AI detection to verifiable digital history.
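To make the idea of a "verifiable digital history" concrete, here is a minimal sketch of a hash-chained authorship log, the basic structure that blockchain-style records build on. It is an illustration only, not how any particular labeling service works: the function names (`append_record`, `verify_chain`) and the record fields are assumptions made for this example. Note that such a log only makes later tampering detectable; it does not by itself prove that a human created the work.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _hash_body(body: dict) -> str:
    """Hash a record body deterministically (keys sorted before serializing)."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_record(chain: list, author: str, content: bytes) -> dict:
    """Append an authorship record linked to the previous record's hash.

    Hypothetical helper: field names are illustrative assumptions.
    """
    prev_hash = chain[-1]["record_hash"] if chain else GENESIS_HASH
    body = {
        "author": author,
        "content_hash": hashlib.sha256(content).hexdigest(),
        "prev_hash": prev_hash,
    }
    record = dict(body, record_hash=_hash_body(body))
    chain.append(record)
    return record


def verify_chain(chain: list) -> bool:
    """Return True only if every record is intact and correctly linked."""
    prev_hash = GENESIS_HASH
    for record in chain:
        body = {key: record[key] for key in ("author", "content_hash", "prev_hash")}
        if record["prev_hash"] != prev_hash:
            return False
        if record["record_hash"] != _hash_body(body):
            return False
        prev_hash = record["record_hash"]
    return True


if __name__ == "__main__":
    log = []
    append_record(log, "alice", b"first draft of an essay")
    append_record(log, "alice", b"revised draft of an essay")
    print(verify_chain(log))   # the untouched log verifies

    log[0]["author"] = "mallory"  # rewrite history
    print(verify_chain(log))   # the altered log no longer verifies
```

Because each record embeds the hash of the one before it, changing any earlier entry invalidates every later link. A real system would additionally need signatures and a shared, independently held copy of the log, which this sketch leaves out.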

Ultimately, for any human-made labeling effort to succeed, a unified standard is crucial. Such a standard would require agreement not only among creators and platforms but also among governments and regulatory bodies worldwide. Without that concerted effort, the authenticity of online content will remain a pressing concern.