Google's New AI Chips Outshine Nvidia

22 Apr

Summary

- Google unveils eighth-gen TPUs: TPU 8t for training and TPU 8i for inference.

- TPU 8t slashes AI model training time from months to weeks.

- New TPUs offer double the performance per watt of the previous generation.

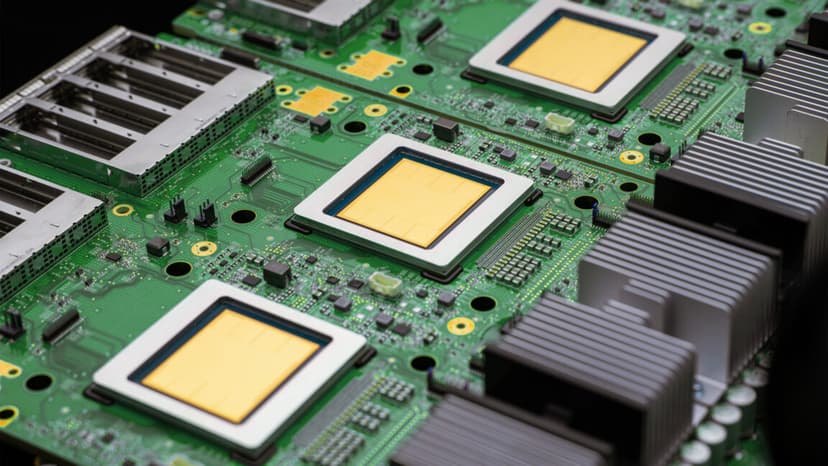

Google has unveiled its eighth-generation Tensor Processing Units (TPUs), designed to accelerate AI workloads beyond current industry standards. The new chips come in two distinct versions: the TPU 8t, optimized for training artificial intelligence models, and the TPU 8i, tailored for inference tasks.

The TPU 8t promises to revolutionize AI model development by reducing training durations from months to weeks. Google's updated 'pods' now house 9,600 chips each, and a single cluster can scale to as many as a million chips.
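To put those two figures side by side, here is a rough back-of-the-envelope sketch in Python. It assumes a cluster is built from whole pods of 9,600 chips and treats "a million" as exactly 1,000,000; neither assumption comes from Google, and the function name is purely illustrative.

```python
import math

CHIPS_PER_POD = 9_600          # pod size quoted in the article
MAX_CLUSTER_CHIPS = 1_000_000  # "up to a million chips", taken as an exact upper bound (assumption)


def pods_needed(total_chips: int, chips_per_pod: int = CHIPS_PER_POD) -> int:
    """Return the smallest number of whole pods that can hold total_chips."""
    if total_chips < 0 or chips_per_pod <= 0:
        raise ValueError("total_chips must be >= 0 and chips_per_pod must be > 0")
    return math.ceil(total_chips / chips_per_pod)


# Whole pods that fit under the million-chip ceiling (integer division).
full_pods = MAX_CLUSTER_CHIPS // CHIPS_PER_POD

print(f"Pods needed to reach {MAX_CLUSTER_CHIPS:,} chips: {pods_needed(MAX_CLUSTER_CHIPS)}")
print(f"Full pods under the ceiling: {full_pods} ({full_pods * CHIPS_PER_POD:,} chips)")
```

Under these assumptions, a million-chip cluster works out to a little over a hundred pods.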

For inference, the TPU 8i focuses on efficiency, particularly for running multiple specialized agents. It features significantly more on-chip SRAM, improving speed for models with longer context windows.

This new generation of TPUs offers substantial efficiency gains, with Google claiming double the performance per watt compared to its predecessor. Google's data centers are also seeing improvements, with integrated networking and more efficient pod layouts enhancing computing power per unit of electricity.

Google's eighth-gen AI accelerators will support its Gemini-based agents and are compatible with popular developer frameworks like PyTorch and TensorFlow, signaling broad availability for third-party developers.