Nvidia Sees 50x Token Boost with OpenAI Codex

25 Apr

Summary

- Nvidia's Codex deployment delivers a 50x increase in token output compared to GPT-4o.

- Costs are reportedly 35x lower than with GPT-4o.

- More than 10,000 employees use Codex across departments.

OpenAI's Codex, running on GPT-5.5, has been deployed across Nvidia in a significant AI-native enterprise implementation. It is now available to more than 10,000 Nvidia employees and has reportedly delivered a 35x reduction in cost and a 50x increase in token output relative to GPT-4o. Employees have described the results as "mind-blowing" and "life-changing."

The deployment has sharply increased productivity. Nvidia reports that debugging that previously took days now finishes in hours, and that weeks of experimentation are compressed into overnight runs. Teams handle complex coding tasks more efficiently and with fewer errors, and can ship features quickly from natural-language prompts.

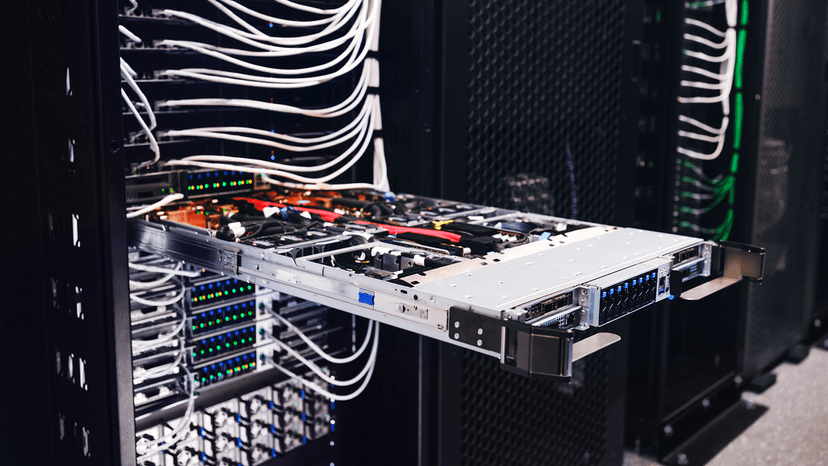

Nvidia attributes these gains to running Codex on its GB200 NVL72 rack-scale systems, which it credits with the cost savings and improved token efficiency. Nvidia CEO Jensen Huang emphasized that Codex operates as an agent that performs work rather than just answering questions.

Codex's capabilities now extend beyond its original role as a coding assistant. It also serves as an agentic assistant for non-technical departments, including product, legal, marketing, finance, sales, HR, and operations. It can securely automate entire workflows within a cloud sandbox, which sets it apart from simpler chatbots and from rival models such as Anthropic's Claude Mythos.