Nvidia Unveils Groq 3 LPU for AI Chatbots

17 Mar

Summary

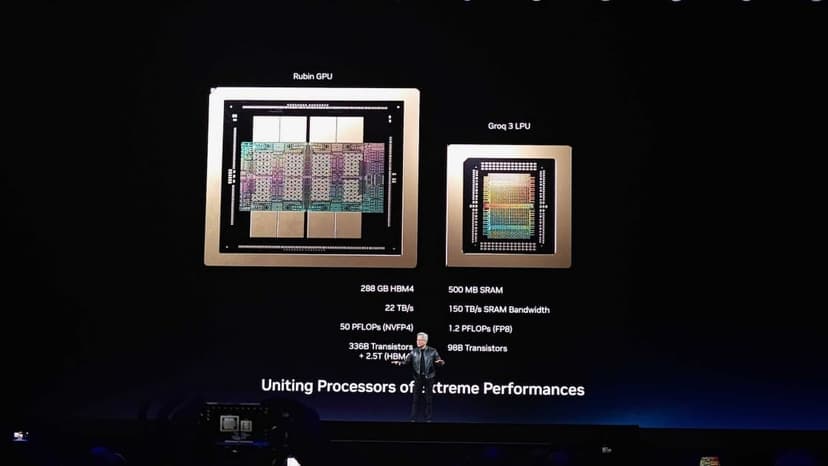

- Nvidia introduces the Groq 3 LPU chip for optimized AI chatbot performance.

- The LPU is built on technology licensed from AI company Groq, founded in 2016.

- LPUs will work alongside Nvidia's Rubin GPUs to speed up AI model computations.

Nvidia is set to release the Groq 3 LPU, a processor designed specifically to speed up large language models and AI chatbots. The chip stems from Nvidia's December licensing agreement with Groq, an AI company founded in 2016 whose LPU (language processing unit) technology targets speed and energy efficiency.

The Groq 3 LPU will complement Nvidia's upcoming 'Vera Rubin' platform, which pairs Rubin GPUs with Vera CPUs for data centers. LPUs use SRAM, which is faster but limited in capacity, so they will be deployed in large numbers alongside Nvidia's GPUs. The combined approach aims to optimize AI workloads, particularly those with longer prompts, and Nvidia projects a potential 35x throughput increase for trillion-parameter models.
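
To see why limited on-chip memory pushes toward deploying many chips at once, here is a rough back-of-envelope sketch. It only counts how many chips would be needed for their combined SRAM to hold a model's weights. The function name `chips_needed` and every number in the example are illustrative assumptions; the article gives no per-chip SRAM capacity or weight precision for the Groq 3 LPU, so the 500 MB figure below is a placeholder, not a specification.

```python
import math


def chips_needed(num_params: int, bytes_per_param: float, sram_bytes_per_chip: int) -> int:
    """Minimum number of chips whose combined SRAM can hold the model weights.

    Ignores activations, caches, and any replication, so this is a lower bound.
    """
    if sram_bytes_per_chip <= 0:
        raise ValueError("sram_bytes_per_chip must be positive")
    total_weight_bytes = num_params * bytes_per_param
    return math.ceil(total_weight_bytes / sram_bytes_per_chip)


# Hypothetical inputs (assumptions, not published figures):
#   1 trillion parameters, 1 byte per parameter, 500 MB of SRAM per chip.
params = 1_000_000_000_000
placeholder_sram = 500 * 10**6

print(chips_needed(params, 1, placeholder_sram))
```

Under these placeholder inputs the weights alone would span thousands of chips, which is consistent with the article's point that LPUs are meant to be deployed in large numbers rather than individually.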