Intel, SambaNova Unite for Faster AI

13 Apr

Summary

- New hardware blueprint combines GPUs, RDUs, and Xeon 6.

- System assigns GPUs to prefill, RDUs to decoding, CPUs to orchestration.

- Available in the second half of 2026 for enterprises.

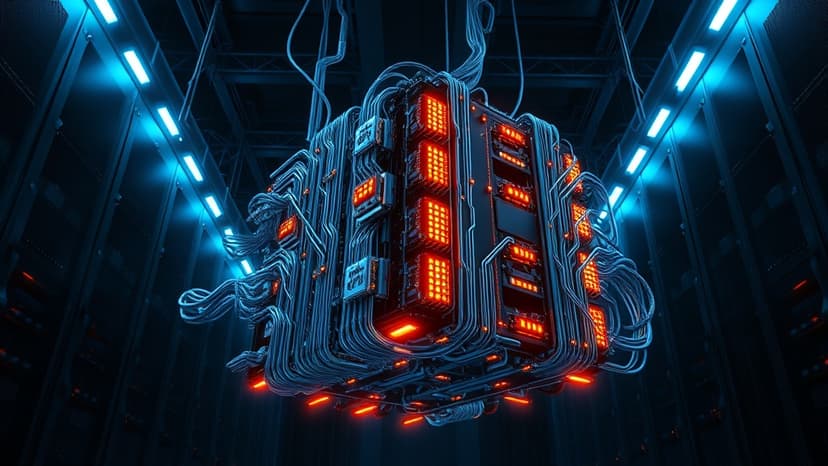

Intel and SambaNova Systems have collaborated on a new hardware blueprint designed to accelerate large-scale AI inference workloads. This architecture combines the strengths of GPUs, SambaNova's Reconfigurable Dataflow Units (RDUs), and Intel Xeon 6 processors.

The system splits inference work by phase. During prefill, GPUs process the input prompt and convert it into key-value (KV) caches. SambaNova RDUs then take over the decoding stage, generating output tokens with high throughput and low latency.

Intel Xeon 6 processors serve as the system's control and execution layer. They manage workload distribution, execute compiled code, and coordinate interactions among concurrent processes. This CPU-centric role is critical for agentic AI environments, where many agents interact at the same time.
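The division of labor described above can be sketched in a few lines of Python. This is a toy illustration only, not Intel's or SambaNova's software: the names `Request`, `gpu_prefill`, `rdu_decode`, and `cpu_orchestrate` are hypothetical, and the "devices" are plain functions that stand in for real hardware.

```python
from dataclasses import dataclass, field


@dataclass
class Request:
    """One inference request moving through the pipeline (hypothetical type)."""
    prompt: str
    max_new_tokens: int
    kv_cache: list = field(default_factory=list)
    output: list = field(default_factory=list)


def gpu_prefill(req: Request) -> Request:
    # Stand-in for the GPU prefill phase: the whole prompt is processed
    # once, producing one cache entry per prompt token.
    req.kv_cache = [f"kv({tok})" for tok in req.prompt.split()]
    return req


def rdu_decode(req: Request) -> Request:
    # Stand-in for the RDU decode phase: tokens are generated one at a
    # time, and each new token extends the cache built during prefill.
    for i in range(req.max_new_tokens):
        token = f"tok{i}"  # placeholder, not a real model output
        req.output.append(token)
        req.kv_cache.append(f"kv({token})")
    return req


def cpu_orchestrate(requests: list) -> list:
    # Stand-in for the CPU control layer: it routes each request to the
    # prefill stage first, then hands the result to the decode stage.
    prefilled = [gpu_prefill(r) for r in requests]
    return [rdu_decode(r) for r in prefilled]


if __name__ == "__main__":
    done = cpu_orchestrate([Request("hello big world", max_new_tokens=2)])
    print(done[0].output, len(done[0].kv_cache))
```

In a real deployment the cache would be transferred between devices and many requests would be in flight at once; the sketch only shows the order in which the three roles hand work to one another.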

This joint solution is scheduled for release in the second half of 2026. It is aimed at enterprises, cloud providers, and sovereign deployments seeking to scale inference without increasing strain on data center resources. The design prioritizes compatibility with existing air-cooled data centers, avoiding the need for new infrastructure builds.