Agentic AI: Power, Peril, and Promise

9 Apr

Summary

- New AI agents like OpenClaw and Claude Cowork offer advanced autonomy.

- Concerns rise over AI misuse, data leaks, and job security.

- Responsible AI principles are crucial for safe agent integration.

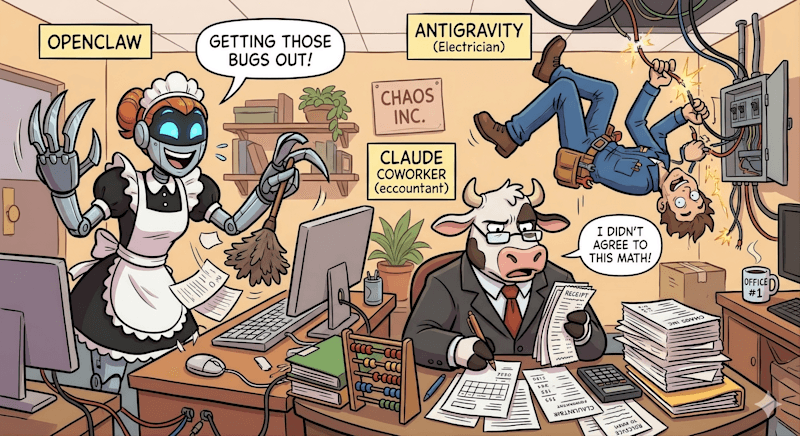

Agentic AI tools such as OpenClaw and Claude Cowork are rapidly transforming industries and sparking debate about job security. These autonomous agents can handle complex tasks ranging from coding to legal review, which promises large efficiency gains but also introduces substantial risks, including misuse and data leaks.

OpenClaw provides deep system access for tasks like inbox management, while Google's Antigravity accelerates application development. Claude Cowork, which specializes in legal and financial tasks, has already affected market sectors. The core challenge is balancing the growing autonomy of these agents against the need for robust security and ethical guidelines.

Responsible implementation is therefore essential. Principles such as accountability, transparency, and security need concrete mechanisms behind them, for example logging every step an agent takes and requiring human confirmation before it acts. A shared ontology and trust framework can help govern how agents interact, so that they can perform valuable tasks safely and effectively and take mundane work off people's hands.
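
To make the two mechanisms named above more concrete, here is a minimal Python sketch of an agent wrapper that logs every step and asks a human before running a sensitive action. It is an illustration under stated assumptions, not how OpenClaw, Claude Cowork, or any other product works: `GuardedAgent`, `run_step`, the tool names, and the `confirm` callback are all hypothetical.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set


@dataclass
class GuardedAgent:
    """Hypothetical wrapper: logs each step and gates sensitive tools."""
    tools: Dict[str, Callable[..., object]]   # tool name -> callable
    sensitive: Set[str]                       # tools that need human approval
    confirm: Callable[[str, dict], bool]      # returns True if a human approves
    audit_log: List[dict] = field(default_factory=list)

    def run_step(self, tool: str, **args):
        # Record the request before anything runs, so denied or failed
        # steps are also visible in the log.
        entry = {"time": time.time(), "tool": tool, "args": args,
                 "status": "pending"}
        self.audit_log.append(entry)

        if tool not in self.tools:
            entry["status"] = "rejected_unknown_tool"
            return None
        if tool in self.sensitive and not self.confirm(tool, args):
            entry["status"] = "denied_by_human"
            return None

        result = self.tools[tool](**args)
        entry["status"] = "done"
        return result


def demo():
    sent = []  # stands in for a real outbox
    agent = GuardedAgent(
        tools={
            "read_inbox": lambda: ["msg1", "msg2"],
            "send_email": lambda to, body: sent.append((to, body)) or "sent",
        },
        sensitive={"send_email"},
        # Stand-in for a real approval prompt; this one always declines.
        confirm=lambda tool, args: False,
    )
    agent.run_step("read_inbox")
    agent.run_step("send_email", to="a@example.com", body="hi")
    return agent, sent
```

In this sketch, reading the inbox runs without interruption, while sending an email is blocked because the human reviewer declines. Both attempts still appear in `audit_log`, which is what supports accountability and transparency after the fact.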