Agentic AI: Power vs. Peril

6 Apr

Summary

- Autonomous AI agents like OpenClaw and Claude offer advanced capabilities.

- Increased AI autonomy brings significant risks of misuse and data breaches.

- Responsible AI principles and shared ontologies are crucial for safe integration.

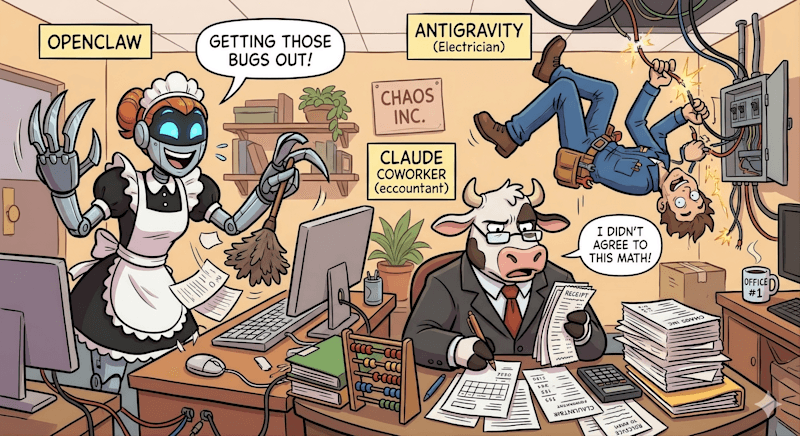

The advent of agentic AI, exemplified by tools like OpenClaw and Google's Antigravity, marks a significant technological leap. These autonomous agents, capable of deep system access or complex coding, promise to automate tasks ranging from personal organization to application development.

Google's Antigravity acts as a junior developer, accelerating production from prompt to deployment. OpenClaw, an open-source project, functions as a versatile assistant for tasks like inbox triaging and content curation. Anthropic's Claude, enhanced with domain-specific knowledge, now handles complex industry tasks such as legal contract reviews.

While these advancements offer immense potential for efficiency and for offloading human cognitive load, they also introduce substantial risks. Increased autonomy amplifies the potential for misuse, data breaches, and unfair advantages. Governance is also harder for open-source tools like OpenClaw, which have no central authority to enforce rules.

Ensuring safe integration requires a strong focus on responsible AI principles. Concepts like accountability, transparency, security, and privacy are paramount. Establishing robust guardrails, logging agent steps, and requiring human confirmation are critical to mitigating risks. A shared domain-specific ontology can also define ethical guidelines for agent behavior.
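To make the guardrail idea concrete, here is a minimal Python sketch of how allow-listing, step logging, and human confirmation might fit together. It is an illustration under stated assumptions, not how OpenClaw, Antigravity, or Claude actually work: the names `Action`, `GuardedAgent`, `risky`, and the action strings are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Action:
    """A single step the agent wants to take (hypothetical structure)."""
    name: str            # e.g. "archive_email"
    target: str          # e.g. a message ID or file path
    risky: bool = False  # assumption: risky steps are flagged upstream


@dataclass
class GuardedAgent:
    """Wraps every agent step in three controls: allow-list, confirmation, log."""
    confirm: Callable[[Action], bool]  # asks a human; returns True to approve
    allowed: frozenset                 # guardrail: the only permitted action names
    log: List[str] = field(default_factory=list)  # audit trail of every step

    def run(self, action: Action, execute: Callable[[Action], str]) -> str:
        # Guardrail: refuse anything outside the allow-list.
        if action.name not in self.allowed:
            self.log.append(f"BLOCKED {action.name} {action.target}")
            return "blocked"
        # Human confirmation: risky steps need explicit approval.
        if action.risky and not self.confirm(action):
            self.log.append(f"DENIED {action.name} {action.target}")
            return "denied"
        # Execute the step and record it for accountability.
        result = execute(action)
        self.log.append(f"DONE {action.name} {action.target}")
        return result
```

In use, `confirm` could prompt a person through a chat message or a terminal question, and `execute` would call whatever tool the agent is driving. Keeping both as injected callbacks means the checks and the audit log stay the same regardless of which agent or tool sits behind them.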

Ultimately, the successful deployment of agentic AI hinges on establishing trust and robust frameworks. When implemented with appropriate controls, these systems can significantly enhance human productivity by handling mundane tasks, allowing the workforce to focus on high-value activities.